Scale-out File Server (SOFS) is a feature that allows you to create file shares that are continuously available and load balanced for application storage. Typical uses are Hyper-V over SMB and SQL Server over SMB. SOFS works by creating a Distributed Network Name (DNN) that is registered in DNS with every IP address assigned to the server on cluster networks marked as “Cluster and Client” (and, if UseClientAccessNetworksForSharedVolumes is set to 1, additionally on networks marked as “Cluster Only”). DNS natively serves up responses in a round robin fashion and SMB Multichannel ensures link load balancing.

If you’ve followed best practices, your cluster likely has a Management network where you’ve defined the Cluster Name Object (CNO), and you likely want to ensure that no SOFS traffic uses those adapters. If you change the setting of that Management network to Cluster Only or None, registration of the CNO in DNS will fail. So how do you exclude the Management network from use by the SOFS? You could use firewall rules, SMB Multichannel Constraints, or, the preferred method, the ExcludeNetworks parameter on the DNN cluster object. PowerShell to the rescue.

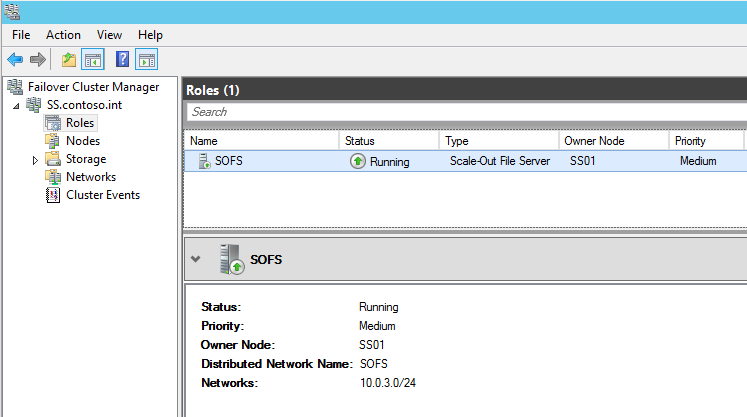

First, let’s take a look at how the SOFS is configured by default:

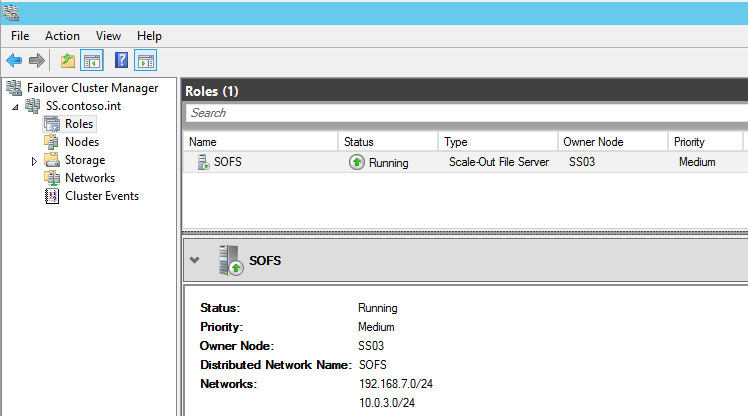

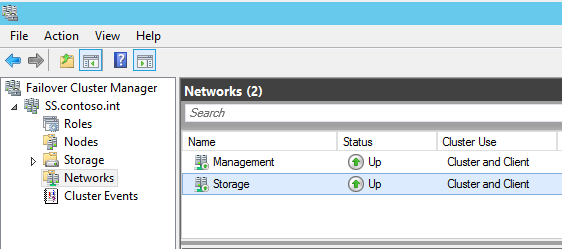

In my configuration, 192.168.7.0/24 is my management network and 10.0.3.0/24 is my storage network where I’d like to keep all SOFS traffic. The CNO is configured on the 192.168.7.0/24 network and you can see that both networks are currently configured for Cluster and Client use:

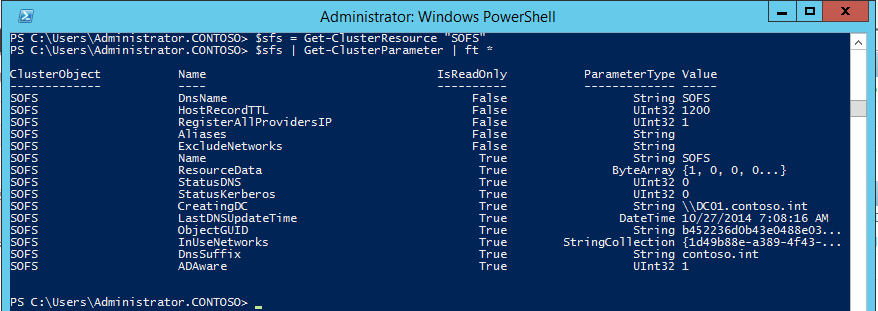
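The network roles shown above can also be read from PowerShell. This is a minimal sketch, assuming the FailoverClusters module is installed and the session runs on a cluster node with sufficient rights:

```powershell
# List each cluster network with its role, subnet, and Id.
# Role values: 0 = None, 1 = Cluster Only, 3 = Cluster and Client
# (depending on the OS version, Role may display as a name instead of a number).
Import-Module FailoverClusters
Get-ClusterNetwork | Format-Table Name, Role, Address, AddressMask, Id -AutoSize
```

The Id column is the value that ExcludeNetworks expects later on.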

Next, let’s take a look at the advanced parameters of the object in PowerShell. “SOFS” is the name of my Scale-out File Server in failover clustering. We’ll use the Get-ClusterResource and Get-ClusterParameter cmdlets to expose these advanced parameters:

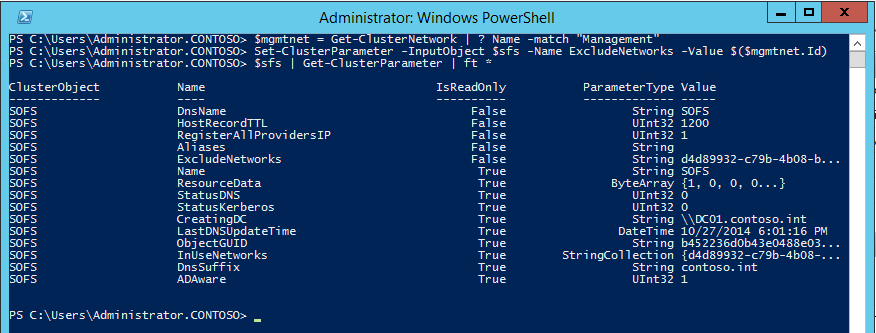
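For readers who cannot see the screenshot, the command looks roughly like this. It is a sketch that assumes the DNN resource is named “SOFS”, as in my cluster; substitute your own resource name:

```powershell
# Dump the private (advanced) parameters of the SOFS network name resource.
# "SOFS" is the resource name used in this article; yours will differ.
Get-ClusterResource -Name "SOFS" | Get-ClusterParameter
```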

You’ll notice a string parameter named ExcludeNetworks, which is currently empty. We’ll set that to the Id of our management network (use a semicolon to separate multiple Ids if necessary). First, use the Get-ClusterNetwork cmdlet to get the Id of the “Management” network, and then the Set-ClusterParameter cmdlet to update the parameter.
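Those two steps can be sketched as follows. The names “SOFS” and “Management” are the ones from my lab and are assumptions for any other environment:

```powershell
# Look up the SOFS resource and the network to exclude.
$sofs = Get-ClusterResource -Name "SOFS"
$mgmt = Get-ClusterNetwork -Name "Management"

# Write the network Id into ExcludeNetworks.
# For several networks, join the Ids with semicolons, e.g.:
#   $value = ($networks.Id) -join ';'
$sofs | Set-ClusterParameter -Name ExcludeNetworks -Value $mgmt.Id

# Confirm the stored value.
$sofs | Get-ClusterParameter -Name ExcludeNetworks
```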

You’ll need to stop and start the resource in cluster manager for it to pick up the change. It should show only the Storage network after doing so.
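If you prefer to cycle the resource from PowerShell rather than from cluster manager, a sketch along these lines should be equivalent. It again assumes the resource is named “SOFS”, and because taking the resource offline may interrupt client access, a maintenance window is a safer assumption:

```powershell
# Take the SOFS network name resource offline and bring it back online
# so that it re-reads ExcludeNetworks.
Stop-ClusterResource -Name "SOFS"
Start-ClusterResource -Name "SOFS"

# Check that the resource is back online.
Get-ClusterResource -Name "SOFS" | Format-Table Name, State, OwnerGroup -AutoSize
```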

Only IPs in the Storage network should now be registered in DNS:

    PS C:\Users\Administrator.CONTOSO> nslookup sofs
    Server:  UnKnown
    Address:  192.168.7.21

    Name:    sofs.contoso.int
    Addresses:  10.0.3.202
              10.0.3.201
              10.0.3.203

Hi,

In my SOFS cluster I have three networks: LAN, SMB1, and SMB2.

I have excluded the LAN network from my SOFS resource, and this worked fine:

    $sofs = Get-ClusterResource "sofs1"
    $net = Get-ClusterNetwork | ? name -Match "LAN"
    Set-ClusterParameter -InputObject $sofs -Name ExcludeNetworks -Value $($net.Id)

Now I want to undo this, but everything I tried failed, so I need some help.

I used Set-ClusterParameter -InputObject $sofs -Name ExcludeNetworks -Value $($net.Id) -Delete

I also stopped and started the resource, but none of it worked.

How can I undo the network exclusion?

Try setting ExcludeNetworks to $null or an empty string ("").
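A sketch of that suggestion, using the resource name “sofs1” from the question. I have not verified this on every Windows Server version, so treat it as a starting point rather than a confirmed fix:

```powershell
# Clear ExcludeNetworks by writing an empty string back to the parameter.
$sofs = Get-ClusterResource -Name "sofs1"
$sofs | Set-ClusterParameter -Name ExcludeNetworks -Value ""

# Cycle the resource so the change takes effect, then confirm the value is empty.
Stop-ClusterResource -Name "sofs1"
Start-ClusterResource -Name "sofs1"
$sofs | Get-ClusterParameter -Name ExcludeNetworks
```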

Hi, I don’t quite understand the DNS part in all of this. I have two SOFS servers with RDMA NICs configured with internal subnet IP addresses. Do I need my own DNS server for this? We have a central DNS server for the “management” network, and it is quite hard to get my internal IP addresses registered there.

DNS registration should happen automatically if your DNS supports dynamic updates. Otherwise, you’ll need to have the internal IP addresses manually registered in DNS for the cluster access point name.
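If manual registration is needed, something like the following could work. It is only a sketch: it assumes a Windows DNS server with the DnsServer module, that it runs on (or is pointed at) that server, and it reuses the zone, name, and addresses from the nslookup output in the article as example values:

```powershell
# Example values taken from the article; replace with your own zone,
# access point name, and storage-network addresses.
Import-Module DnsServer
$zone      = "contoso.int"
$name      = "sofs"
$addresses = "10.0.3.201", "10.0.3.202", "10.0.3.203"

# Create one A record per storage IP so DNS round robin can rotate through them.
foreach ($ip in $addresses) {
    Add-DnsServerResourceRecordA -ZoneName $zone -Name $name -IPv4Address $ip
}

# Verify what clients will resolve.
Resolve-DnsName -Name "$name.$zone" -Type A
```

On a non-Windows DNS server the same A records would be created with that server's own tooling.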

Hi Jeff,

Do you have an idea how to make this work with VMM?

My VMM server is virtual, and all the Hyper-V hosts are configured with RDMA NICs on 2 VLANs.

The SOFS cluster is configured as you describe, also with the RDMA NICs.

The “problem” is that it’s not possible to add the SOFS to VMM. The VMM server tries to connect to the “SMB network” when it scans for arrays and shares, even though the cluster name and IPs of the SOFS nodes are correctly configured on the cluster network (where the VMM server also “lives”).

Thanks

Anker

I have a similar setup in my lab. I was able to get it working by adding a hosts file entry on the VMM server that points the SOFS name to the IP address of the root cluster name object.
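A hosts file entry of that kind could be added as sketched below. The IP address and SOFS name are hypothetical placeholders, not values from my lab, and the command needs an elevated PowerShell session on the VMM server:

```powershell
# Hypothetical values: replace with the management IP of your cluster name
# object and the name of your SOFS access point.
$cnoIp    = "192.168.7.50"        # assumed address, for illustration only
$sofsName = "sofs.contoso.int"

# Append "<ip><tab><name>" to the local hosts file.
$hostsPath = Join-Path $env:SystemRoot "System32\drivers\etc\hosts"
Add-Content -Path $hostsPath -Value "$cnoIp`t$sofsName"
```

This overrides DNS only on the machine where the entry is added, so the Hyper-V hosts keep resolving the SOFS name to the storage network.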

I have a similar problem. I have 2 RDMA NICs on different VLANs on each storage server and each Hyper-V host.

I want this to be a completely segregated network, separate from all of the VM traffic and the virtual switches.

However, as you say, to manage SOFS in VMM you need a connection to the storage layer.

DNS is set to resolve the SOFS role (mine’s called BetaStorage1) to the 4 storage IPs, so it forces the Hyper-V hosts to use the storage network.

I want VMM to use the slower management network but don’t want to change the DNS entries. Adding the name to the VMM server’s hosts file works, but it does not provide redundancy, because a hosts file effectively resolves a given name to only one IP address.

I would want betastorage1 to resolve to the management NICs on both storage servers.

Any ideas?

An easy solution for your problem could be a load balancer in front of the IP that you provide in the hosts file.